Artificial intelligence has moved beyond experimentation and now powers critical operations across many industries. As organizations deploy AI models into real-world environments, the focus shifts from training models to running them efficiently in production. This stage—known as AI inference—determines how quickly and reliably AI systems deliver results.

- Cloud AI Inference: Flexibility and Rapid Deployment

- On-Prem AI Inference: Control and Performance Stability

- Cost Considerations for AI Inference

- Security, Compliance, and Workforce Readiness

- Industry Use Cases Shaping AI Inference Choices

- Hybrid AI Inference: Combining the Best of Both Worlds

- Making the Right AI Infrastructure Decision

- Turning AI Inference Into a Competitive Advantage

Because of this, choosing the right AI inference strategy—cloud vs on-prem—has become a strategic decision rather than just a technical one. The environment used for inference affects performance, scalability, cost structure, compliance, and operational control.

For business leaders evaluating AI adoption, understanding the differences between cloud and on-prem inference helps align AI infrastructure with real operational goals.

Cloud AI Inference: Flexibility and Rapid Deployment

Cloud-based inference is often the first option organizations consider because it offers speed and flexibility. Cloud providers allow companies to deploy AI models quickly without investing in expensive hardware or maintaining their own infrastructure.

With cloud inference, businesses can scale workloads up or down depending on demand. This elasticity is especially valuable for organizations with unpredictable traffic patterns, such as e-commerce platforms, digital marketing systems, or customer service automation tools.
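To make the idea of elasticity concrete, here is a minimal sketch of how a scaling policy might turn current traffic into a number of model replicas. The function name, the `headroom` parameter, and the throughput figures are illustrative assumptions, not any cloud provider's API.

```python
import math


def replicas_needed(requests_per_second: float,
                    per_replica_rps: float,
                    headroom: float = 0.2,
                    min_replicas: int = 1) -> int:
    """Return how many model replicas to run for the current load.

    All parameters are hypothetical inputs for illustration:
    - requests_per_second: observed incoming traffic
    - per_replica_rps: throughput one replica can sustain
    - headroom: extra capacity kept in reserve (0.2 = 20%)
    - min_replicas: floor so the service never scales to zero
    """
    if per_replica_rps <= 0:
        raise ValueError("per_replica_rps must be positive")
    # Capacity required, including the safety margin.
    target = requests_per_second * (1.0 + headroom) / per_replica_rps
    # Round up: a fractional replica cannot be deployed.
    return max(min_replicas, math.ceil(target))
```

As traffic rises and falls, re-running this calculation yields a higher or lower replica count, which is the behavior that makes cloud inference attractive for unpredictable demand.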

Cloud platforms also integrate easily with modern data pipelines, analytics tools, and monitoring systems. Many cloud providers now offer specialized AI hardware accelerators designed to improve inference speed and efficiency.

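However, cloud inference also introduces challenges. Data sovereignty regulations can restrict where sensitive data may be processed, network latency adds a round trip to every request, and usage-based charges grow with workload volume. These concerns matter most for organizations handling sensitive data or running high-volume workloads.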

On-Prem AI Inference: Control and Performance Stability

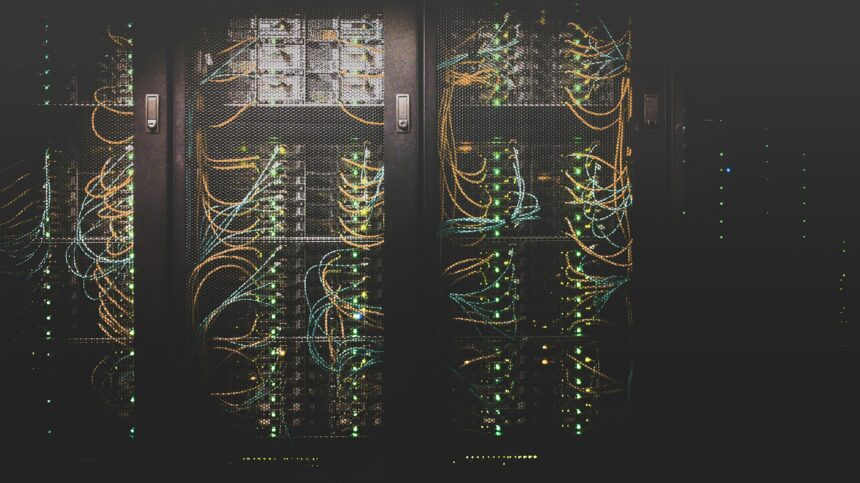

On-premises AI inference offers a different set of advantages. Instead of relying on external infrastructure, companies deploy AI models on their own servers or in internal data centers.

Organizations operating in highly regulated industries—such as finance, healthcare, or government—often prefer this approach because it keeps sensitive data within their own networks. This helps support strict security policies and regulatory compliance.

Another benefit of on-prem inference is predictable performance. Applications that require extremely low latency, such as financial trading systems, manufacturing automation, or real-time monitoring, often perform better when inference runs locally, because each request avoids a network round trip to external servers and the variability that comes with it.

The primary drawback is the initial investment required. Building and maintaining on-prem infrastructure requires hardware purchases, system maintenance, and specialized technical expertise.

Cost Considerations for AI Inference

Cost plays a major role when evaluating cloud vs on-prem AI inference.

Cloud platforms generally follow a pay-as-you-go pricing model. This makes them attractive for startups or organizations testing new AI applications because they avoid large upfront investments.

However, because charges scale with usage, organizations running continuous or large-scale inference workloads can find that cumulative cloud spending eventually exceeds what equivalent owned hardware would have cost.

On-prem systems require higher initial capital expenditure but may become more cost-efficient over time if workloads remain stable and predictable. Companies must also consider hidden costs such as electricity, cooling systems, staffing, and hardware upgrades.

A full total cost of ownership (TCO) analysis is essential before selecting a deployment model.
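As a rough illustration of the comparison a TCO analysis makes, the sketch below estimates the month at which cumulative on-prem spending stops exceeding cumulative cloud spending. It assumes flat monthly costs and a stable workload, and every figure passed in is a hypothetical input; a real analysis would also model hardware refresh cycles, staffing changes, and price changes.

```python
import math
from typing import Optional


def cumulative_cloud_cost(months: int, monthly_cloud_cost: float) -> float:
    """Total cloud spend after `months`, assuming a flat monthly bill."""
    return months * monthly_cloud_cost


def cumulative_onprem_cost(months: int, upfront: float,
                           monthly_opex: float) -> float:
    """Total on-prem spend: hardware purchase plus recurring costs
    (electricity, cooling, staffing, maintenance)."""
    return upfront + months * monthly_opex


def break_even_month(upfront: float, monthly_opex: float,
                     monthly_cloud_cost: float) -> Optional[int]:
    """First month at which on-prem is no more expensive than cloud.

    Returns None when on-prem never catches up, i.e. when its monthly
    operating cost is at least as high as the monthly cloud bill.
    """
    monthly_saving = monthly_cloud_cost - monthly_opex
    if monthly_saving <= 0:
        return None
    return math.ceil(upfront / monthly_saving)
```

With hypothetical inputs of 120,000 upfront, 3,000 per month to operate, and an 8,000 monthly cloud bill, `break_even_month(120_000, 3_000, 8_000)` returns 24. A workload expected to run for less time than that would favor the cloud under these assumptions.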

Security, Compliance, and Workforce Readiness

Security and compliance requirements also influence AI deployment strategies.

On-prem inference environments allow organizations to maintain full control over data access and security protocols. This can simplify compliance with strict regulatory frameworks in sectors such as banking or healthcare.

Cloud environments reduce infrastructure management responsibilities but require trust in external providers to manage security and data protection. Organizations must ensure their cloud vendors meet regulatory and compliance standards.

Workforce capabilities are another important factor. Running on-prem infrastructure often requires dedicated engineering teams with specialized skills. Cloud platforms, by contrast, reduce infrastructure complexity but require expertise in cloud architecture and cost optimization.

Industry Use Cases Shaping AI Inference Choices

Different industries tend to favor different inference approaches depending on their operational requirements.

Industries dealing with sensitive or regulated data—such as finance, healthcare, and government—often rely on on-prem inference to maintain security and compliance.

Meanwhile, industries that prioritize scalability and rapid experimentation—such as retail, media, and digital marketing—often benefit from cloud inference because it allows them to handle fluctuating workloads efficiently.

For example, recommendation engines or real-time marketing personalization systems often rely on cloud infrastructure to process high volumes of user interactions quickly.

These examples demonstrate that AI inference strategies should be tailored to business context rather than adopting a universal approach.

Hybrid AI Inference: Combining the Best of Both Worlds

Increasingly, organizations are adopting hybrid AI inference architectures. In this model, sensitive or latency-critical workloads run on-prem while less sensitive workloads run in the cloud.

Hybrid systems provide the scalability of cloud infrastructure while preserving the control and security of on-prem environments. This approach allows businesses to optimize performance and cost while maintaining flexibility.
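The routing rule described above can be sketched in a few lines. This is a simplified illustration, not a reference architecture: the `InferenceRequest` fields, the target labels, and the 80 ms cloud round-trip figure are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InferenceRequest:
    """Hypothetical request metadata used to pick a deployment target."""
    contains_sensitive_data: bool
    latency_budget_ms: float


def choose_target(request: InferenceRequest,
                  cloud_round_trip_ms: float = 80.0) -> str:
    """Return "on_prem" or "cloud" for a single request.

    cloud_round_trip_ms is an assumed typical network round trip to the
    cloud endpoint; in practice it would be measured, not hard-coded.
    """
    # Sensitive data stays inside the organization's own network.
    if request.contains_sensitive_data:
        return "on_prem"
    # Requests whose latency budget cannot absorb the network round trip
    # are treated as latency-critical and also stay local.
    if request.latency_budget_ms < cloud_round_trip_ms:
        return "on_prem"
    # Everything else can use elastic cloud capacity.
    return "cloud"
```

Keeping the rule in one small function makes the policy easy to audit, which matters when the routing decision is itself part of a compliance argument.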

Making the Right AI Infrastructure Decision

Choosing the right AI inference strategy requires evaluating several factors:

- Workload characteristics and scale

- Data sensitivity and compliance requirements

- Latency and performance needs

- Long-term cost considerations

- Internal technical capabilities

Organizations often begin with pilot deployments to test real-world performance, costs, and operational complexity before committing to a full infrastructure strategy.
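One way to compare pilot results across environments is to reduce each run to a few numbers: median latency, tail latency, and cost per thousand requests. The sketch below assumes the pilot has recorded per-request latencies and a total bill; the function names and the choice of nearest-rank percentiles are illustrative, not a prescribed method.

```python
from typing import Dict, Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    `pct` percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range 1..100")
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100, avoiding floating-point rounding.
    rank = -(-pct * len(ordered) // 100)
    return ordered[rank - 1]


def summarize_pilot(latencies_ms: Sequence[float],
                    total_cost: float) -> Dict[str, float]:
    """Summarize one pilot run (inputs are hypothetical measurements)."""
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
        "cost_per_1k_requests": total_cost * 1000 / len(latencies_ms),
    }
```

Running `summarize_pilot` once on the cloud pilot's measurements and once on the on-prem pilot's gives two small dictionaries that can be compared side by side against the latency and cost requirements listed above.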

Turning AI Inference Into a Competitive Advantage

AI inference infrastructure directly affects how effectively organizations can use artificial intelligence to support business operations. The right deployment strategy supports reliable performance, manageable costs, and compliance with industry standards.

Businesses that carefully evaluate their infrastructure options—whether cloud, on-prem, or hybrid—position themselves to scale AI systems confidently while maintaining operational control.

Organizations such as Ittrendswire continue to provide expert guidance and strategic insights that help businesses navigate complex AI infrastructure decisions and transform emerging technologies into sustainable competitive advantages.